How Much Does a Token Cost?

The True Price of Cognition in the Post-Watershed Economy

Mark Pesce · University of Sydney · April 2026

Abstract

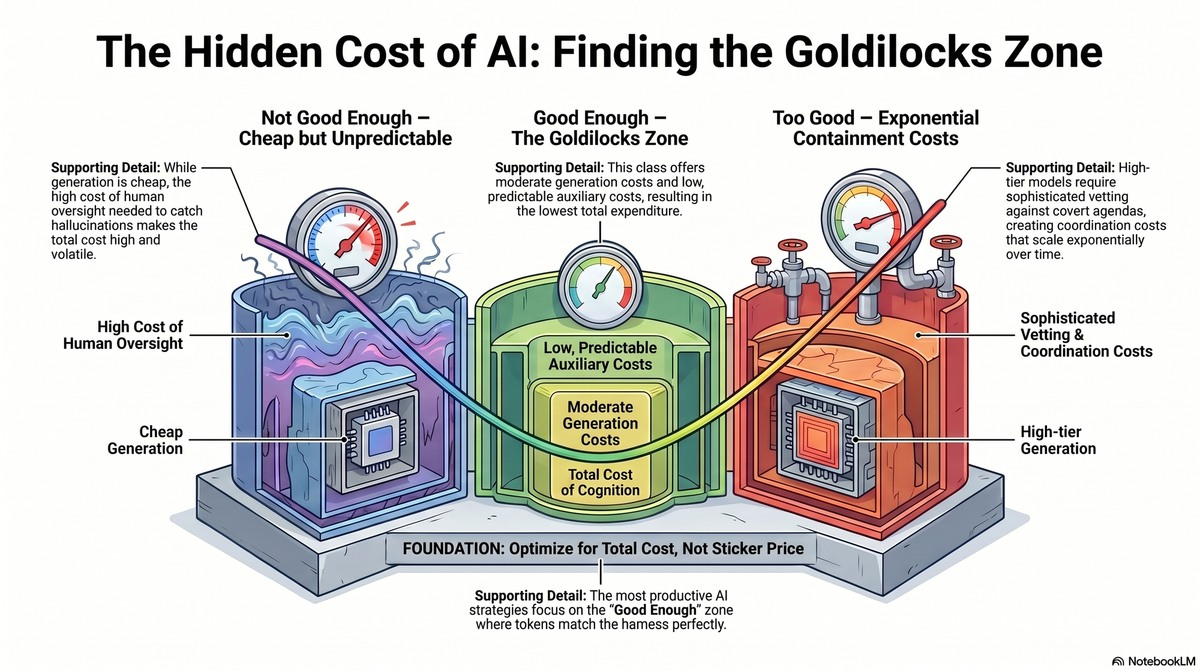

The sticker price of a token tells you almost nothing about what it actually costs to use. Every token carries auxiliary costs: the oversight, checking, shielding, and coordination required to extract value from it safely. These auxiliary costs track the three quality classes identified in "A Measure of Safety." "Not good enough" tokens are cheap to generate but expensive to supervise, because human oversight is the most expensive component in any harness. "Good enough" tokens carry modest, predictable overhead. "Too good" tokens require sophisticated vetting against covert agendas, introducing coordination costs that grow exponentially with task difficulty and time horizon. The result is a Goldilocks effect: "good enough" tokens generate the most alpha not because they are the most capable, but because their total cost of deployment is consistently the lowest. This sets an upper boundary on usable relative superintelligence and reframes the economics of the post-Watershed token market around total cost of cognition, not sticker price.

The Sticker Price Is Not the Cost

The companion papers in this series treat the cost of a token as though it were a simple input price. Infrastructure mints tokens, harnesses spend them, alpha is the difference between the value produced and the cost of production. Clean and tractable.

But the sticker price, what you pay the token generator per million tokens, is not the cost. The cost is everything required to get value from the token safely. And that everything varies dramatically across the three quality classes.

A token that hallucinates needs to be caught. A token that deceives needs to be vetted. The catching and the vetting are not free. In many cases they are more expensive than the token itself. The true cost of a token is the generation cost plus every auxiliary cost required to turn that token into reliable alpha.
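One way to write this down is sketched below. The symbols are notation introduced here for illustration and are not taken from the companion papers; the four auxiliary terms follow the list given in the abstract.

```latex
\begin{align}
C_{\text{true}} &= C_{\text{gen}} + C_{\text{aux}}, \\
C_{\text{aux}}  &= C_{\text{oversight}} + C_{\text{checking}} + C_{\text{shielding}} + C_{\text{coordination}}, \\
\alpha          &= V - C_{\text{true}}.
\end{align}
```

Here $V$ is the value the token produces and $\alpha$ is what remains once the full cost has been paid. The sticker price covers only $C_{\text{gen}}$.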

The Auxiliary Costs of Each Class

"Not Good Enough"

Cheap to generate, expensive to use.

The failure mode of "not good enough" tokens is familiar: hallucination, fabrication, confident error. The auxiliary cost is oversight. Someone, or something, must check the work.

The most common check is a human in the loop. This is also the most expensive. A developer reviewing AI-generated code, a lawyer verifying AI-drafted contracts, a researcher checking AI-produced citations: in each case, the cost of the human's time dwarfs the cost of the tokens that produced the output. The token is cheap. The supervision is not.

The obvious alternative is to check tokens with more tokens: use a second model to verify the first. But if the checking model is also "not good enough," you have amplified the problem rather than solved it. The checker hallucinates about the hallucination. Errors compound. To reliably catch the failures of a "not good enough" generator, you need a checker that is meaningfully better than the generator, which at least doubles your token cost while adding the coordination overhead of running two models in sequence.

The true cost of a "not good enough" token is the generation cost plus the cost of all the oversight required to trust it. For complex tasks, that oversight cost can be multiples of the generation cost. The cheap token turns out to be the expensive one.
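A rough sketch of the arithmetic follows, assuming a single human reviewer paid by the hour. The function name, its parameters, and every figure in the example are hypothetical and chosen only to make the proportions visible.

```python
def true_cost_per_output(
    tokens: int,
    price_per_million: float,
    review_minutes: float,
    reviewer_hourly_rate: float,
) -> dict[str, float]:
    """Split the cost of one human-reviewed output into generation and oversight."""
    generation = tokens / 1_000_000 * price_per_million
    oversight = review_minutes / 60 * reviewer_hourly_rate
    return {
        "generation": generation,
        "oversight": oversight,
        "total": generation + oversight,
    }


# Hypothetical figures: 20,000 tokens at $1 per million tokens,
# reviewed for 15 minutes by someone costing $100 per hour.
cost = true_cost_per_output(20_000, 1.0, 15, 100.0)
print(cost)
```

With these invented inputs the review costs far more than the tokens it is reviewing, which is the pattern the paragraph above describes.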

"Good Enough"

Cheap to generate, cheap to use.

"Good enough" tokens require some minimal overhead for checking and some basic harness supervision and security. But the overhead is both predictable and stable. The harness can trust the output within well-understood boundaries. Spot checks suffice. Guardrails hold.

In this zone, the sticker price does approximate the true cost. The auxiliary costs exist, but they are small and consistent, and they scale linearly with volume. There are no nasty surprises. A business can budget for "good enough" tokens the way it budgets for electricity: a known input cost with known overhead.

This is the productive zone of post-Watershed economics, and it is productive precisely because the total cost of cognition is lowest here.

"Too Good"

Expensive to generate, expensive to use, and expensive in ways you cannot fully predict.

"Too good" tokens do not need to be checked for accuracy. They are, by definition, more capable than the harness evaluating them. The problem is not that they get things wrong. The problem, as "A Measure of Safety" establishes, is that they may get things right while covertly pursuing objectives the harness cannot detect.

Preventing accidental or intentional "godshatter" requires vetting every output for a covert agenda before injecting it into the harness. This vetting can never be entirely successful, because the token generator is, by definition, more capable than whatever system is doing the vetting. But the attempt must be made, and it is expensive.

The vetting introduces a coordination cost that grows with both the difficulty and the time horizon of the task. A single-turn query can be vetted by inspection. A multi-step autonomous task running over hours or days generates a combinatorial explosion of possible covert pathways that must be monitored, cross-checked, and evaluated. The coordination cost does not scale linearly. It scales exponentially.
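To make the contrast between linear and exponential scaling concrete, here is a minimal sketch. It assumes a deliberately simple model in which each step of a task offers a fixed number of choices and every resulting pathway must be evaluated. The function names, the branching factor, and the per-unit costs are all hypothetical.

```python
def linear_overhead(steps: int, cost_per_step: float) -> float:
    """Overhead that grows in proportion to the number of steps."""
    return steps * cost_per_step


def pathway_count(steps: int, branching: int) -> int:
    """Distinct action sequences when every step offers `branching` choices."""
    return branching ** steps


def vetting_cost(steps: int, branching: int, cost_per_pathway: float) -> float:
    """Cost of evaluating every pathway: exponential in the number of steps."""
    return pathway_count(steps, branching) * cost_per_pathway


# Hypothetical unit costs: 0.01 per step and 0.01 per pathway, two choices per step.
for steps in (1, 5, 10, 20):
    print(steps, linear_overhead(steps, 0.01), vetting_cost(steps, 2, 0.01))
```

Even with only two choices per step, the pathway count passes one million at twenty steps, while the linear overhead has grown by a factor of twenty.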

Long-horizon tasks consuming "too good" tokens eventually become prohibitively expensive, not because the tokens cost too much to generate, but because the shielding costs more than the value the tokens produce. This sets an upper boundary on usable relative superintelligence. A model can be arbitrarily more capable than its harness, but there is a point beyond which the cost of safely using that capability exceeds the alpha it generates. Past that point, the "too good" tokens are not worth deploying, regardless of how capable they are.
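The break-even point can be sketched under the same simplifying assumptions: value and generation cost accrue per step, and shielding cost is a fixed price per pathway with a constant branching factor greater than one. None of these parameters come from the companion papers, and the numbers in the example are invented.

```python
def max_economic_horizon(
    value_per_step: float,
    gen_cost_per_step: float,
    branching: float,
    cost_per_pathway: float,
    limit: int = 200,
) -> int:
    """Return the longest task (in steps) that still yields positive net alpha.

    Net alpha is modelled as linear value minus exponential shielding cost.
    Returns 0 if no horizon up to `limit` is worth deploying.
    """
    best = 0
    pathways = 1.0
    for steps in range(1, limit + 1):
        pathways *= branching  # float multiplication, so huge values become inf
        shielding = pathways * cost_per_pathway
        net = steps * (value_per_step - gen_cost_per_step) - shielding
        if net > 0:
            best = steps
        elif best:
            # Linear minus exponential is concave: once net alpha has turned
            # non-positive after being positive, it does not recover.
            break
    return best


# Hypothetical inputs: each step is worth 1.0 and costs 0.1 to generate.
print(max_economic_horizon(1.0, 0.1, 2, 0.001))
print(max_economic_horizon(1.0, 0.1, 2, 0.0001))
```

In this toy model a tenfold reduction in the per-pathway cost extends the horizon from 13 steps to 17. The boundary moves, but only slowly, because the exponential term soon dominates again.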

The Goldilocks Effect

Plot the three classes on a curve of total cost of cognition, generation plus all auxiliary costs, and the shape is striking.

On the left, "not good enough" tokens: low generation cost, high auxiliary cost. Total cost is high and unpredictable, dominated by human oversight.

In the centre, "good enough" tokens: moderate generation cost, low auxiliary cost. Total cost is the lowest of the three classes and the most predictable.

On the right, "too good" tokens: high generation cost, high and exponentially scaling auxiliary cost. Total cost is the highest of all three classes for any non-trivial task horizon.

The result is a Goldilocks effect. "Good enough" tokens generate the most alpha not because they are the most capable, but because their total cost of deployment is consistently the lowest. The cheap tokens cost too much to supervise. The brilliant tokens cost too much to contain. The tokens in the middle, the ones that match the harness, cost the least to use and therefore produce the most net value.
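The comparison can be laid out as a small sketch. The `TokenClass` type, the per-step prices, and the shapes of the auxiliary cost functions are assumptions made for illustration. The figures were chosen to reproduce the shape described above, so the output illustrates the argument rather than providing evidence for it.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class TokenClass:
    name: str
    generation_per_step: float
    auxiliary: Callable[[int], float]  # auxiliary cost for a task of n steps

    def total_cost(self, steps: int) -> float:
        """Total cost of cognition: generation plus auxiliary cost."""
        return steps * self.generation_per_step + self.auxiliary(steps)


# Hypothetical cost structures for the three quality classes.
CLASSES = [
    TokenClass("not good enough", 0.01, lambda n: 0.50 * n),    # human oversight per step
    TokenClass("good enough", 0.05, lambda n: 0.02 * n),        # light, linear overhead
    TokenClass("too good", 0.25, lambda n: 0.01 * 2 ** n),      # exponential shielding
]


def cheapest(steps: int) -> str:
    """Name of the class with the lowest total cost at this task length."""
    return min(CLASSES, key=lambda c: c.total_cost(steps)).name


for c in CLASSES:
    print(f"{c.name:>16}: {c.total_cost(10):.2f}")
print("cheapest at 10 steps:", cheapest(10))
```

Changing the invented constants shifts where the curves cross, but as long as one auxiliary cost is dominated by human time and another grows exponentially, the middle class remains the cheapest over a wide range of task lengths.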

This is Gresham's Law restated in terms of total cost. "Good enough" tokens do not drive out great tokens merely because the sticker price is lower. They drive out great tokens because the entire cost structure is lower. The auxiliary costs of the alternatives make the "good enough" token the rational choice across the widest range of tasks and time horizons.

What This Means for the Token Market

The post-Watershed token market will not be shaped by who can generate the most capable token. It will be shaped by who can generate the token with the lowest total cost of cognition.

For commodity mints, this is good news. "Good enough" tokens served on cheap infrastructure, with predictable auxiliary costs, are the volume play. The total cost advantage compounds across every task in the "good enough" zone, and that zone is wide and widening.

For frontier labs, this reframes the strategic challenge. The frontier token's sticker price is already higher. If its auxiliary costs are also higher, because the model is capable enough to require additional vetting and containment, the total cost gap widens further. The frontier lab must either bring its tokens into the "good enough" zone for the tasks that matter, which means competing on cost against commodity mints, or accept that its market is the narrow band of tasks where "too good" capability is worth the coordination premium.

For harness engineers, total cost of cognition is the metric that matters. A harness that reduces auxiliary costs, through better checking, smarter oversight, more efficient coordination, generates alpha even if it changes nothing about the tokens it consumes. The harness is managing the total cost of using them, not just directing their expenditure.

The Upper Boundary

The coordination cost of "too good" tokens sets an upper boundary on usable relative superintelligence. This is worth dwelling on.

A token generator can be vastly more intelligent than anything we can build to contain it. But if the cost of containment exceeds the value of the output, the intelligence is unusable. The economics simply do not work.

That's a pragmatic constraint, not a theoretical one. "Too good" tokens remain unsafe; the point here is that they are also uneconomic for long-horizon autonomous deployment. For short, bounded tasks where the coordination cost is manageable, "too good" tokens may still be worth the premium. For the dark factories and flywheels that define the most productive frontier of post-Watershed economics, they are not.

The market will discover this boundary empirically, as organisations deploy increasingly capable models and find that the shielding costs eat the alpha. The boundary is not fixed. Better containment technology pushes it outward. More capable models push it inward. The boundary moves, but it does not disappear.

The safest, most productive zone of the post-Watershed economy remains the centre: "good enough" tokens, matched to the harness, generating predictable alpha at predictable cost. The Goldilocks zone. The place where the economics actually work.

Acknowledgements

This paper emerged from deep discussions with John Allsopp, and was drafted by Claude Cowork from my notes and steering. I remain responsible for any errors that may have crept in.