Gresham's Law and the Fungibility of Tokens

Mark Pesce · University of Sydney · April 2026

A brief exploration of the economics of 'good enough' tokens. Foundations of Post-Watershed Economics provides background on the topics covered here.

Abstract

Frontier AI tokens are fungible across a large and growing set of cognitive tasks - in any domain where two tokens generate equivalent alpha, rational actors will always choose the cheaper one. Applying Gresham’s Law to post-Watershed token economics, this paper argues that “good enough” tokens will drive out great tokens, creating massive demand for commodity mints serving open-weight models on cheap infrastructure while confining frontier labs to a shrinking luxury market. The winners in the “good enough” mint are determined not by model quality but by physical infrastructure advantages - the same logic that governs the rest of the post-Watershed economy.

Not all tokens are created equal.

A token generated by Opus 4.6 is self-evidently of higher quality than a token generated by GPT-3.5. We use benchmarks to demonstrate this - leaderboards, Elo scores, coding evaluations - but benchmarks are proxies. The real measure of a token's value is the alpha it generates when put to work. And there is no question that tokens from today's frontier models generate more alpha than tokens from the frontier models of three years ago.

But taken as a group, are all frontier tokens of equal value? This is a more nuanced question. One frontier token does not precisely equal another in a given task within a given harness. Frontier tokens are fungible - until they're not.

An Opus 4.6 token and a GPT-5.4 token are likely to generate equivalent amounts of alpha on a programming task. Both can take a specification and build a working application. Both can sustain autonomous work across the task horizons that matter. In programming, the tokens are interchangeable. But in design, in legal reasoning, in tasks that require sustained aesthetic judgment or domain-specific nuance, the equivalence breaks down. One model may generate meaningfully more alpha than another. The fungibility is task-dependent and harness-dependent.

In any domain where two tokens generate the same alpha, a rational market participant will always choose the cheaper one. This is not a preference. It is arithmetic. If two tokens produce equivalent output and one costs half as much, half of every unit spent on the expensive token is alpha destroyed. The rational actor maximises alpha by minimising token cost, holding output quality constant.
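The arithmetic can be made concrete with a small sketch. Everything in it is an illustrative assumption rather than anything measured: the `TokenOffer` type, the 0-1 quality scale, the dollar figures, and the idea that a task's quality requirement can be expressed as a single threshold.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenOffer:
    """A hypothetical quote from one mint for one task."""
    name: str
    quality: float  # assumed task-specific output quality on a 0-1 scale
    cost: float     # total token spend for the task, in dollars


def cheapest_sufficient(offers, required_quality):
    """Return the lowest-cost offer that meets the threshold, or None."""
    sufficient = [o for o in offers if o.quality >= required_quality]
    return min(sufficient, key=lambda o: o.cost, default=None)


def net_alpha(output_value, offer):
    """Alpha kept after paying for tokens: value of the output minus spend."""
    return output_value - offer.cost


# Illustrative numbers only: both offers clear the bar, one costs half as much.
offers = [
    TokenOffer("frontier", quality=0.95, cost=10.0),
    TokenOffer("good_enough", quality=0.90, cost=5.0),
]
choice = cheapest_sufficient(offers, required_quality=0.85)

# Alpha given up by a buyer who pays for the frontier token anyway.
alpha_lost_by_overpaying = net_alpha(100.0, choice) - net_alpha(100.0, offers[0])
```

Under these assumed numbers the cheaper offer is chosen and the buyer who insists on the frontier token gives up five dollars of alpha for no gain in usable output. Raise the threshold above 0.90 and the frontier token becomes the only sufficient offer, which is the point at which fungibility breaks down.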

This creates a structural demand pattern. While there will always be buyers for the highest-quality tokens - call them luxury goods, available to those with the capital to absorb the alpha loss in exchange for marginal quality improvements - the overwhelmingly larger market is for "good enough" tokens: satisfying a sufficient threshold of alpha generation at a cost significantly below the frontier price.

This is not a new economic pattern. It sits somewhere between arbitrage and investment maximisation. You do not hire a Senior Counsel for a parking fine. The task defines the required quality; the market finds the cheapest provider at that quality level. Tokens are no different.

The implication for the mint is direct: one of the best businesses to be in right now is generating "good enough" tokens.

The costs of running a "good enough" mint are far lower than generating frontier tokens. You do not need the latest NVIDIA silicon. You do not need the research team. You do not need the training runs that cost hundreds of millions. Open-weight models - Qwen 3.5, GLM-5.1, MiniMax-m2.7 - are approaching frontier performance on the tasks where fungibility holds. They are “good enough”. The capital expenditure to serve them is a fraction of what Anthropic or OpenAI spend to serve theirs.

At a lower price-point per token, demand mushrooms. Jevons paradox applies with particular force here: every reduction in the cost of a "good enough" token makes it economic to attempt tasks that were previously too expensive, generating new demand that more than absorbs the cost reduction. The cheap mint does not cannibalise the expensive mint's market. It creates a market the expensive mint could never serve.
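A minimal sketch shows the condition under which this holds. It assumes a constant-elasticity demand curve, which is a textbook simplification and not a claim about how token demand actually behaves; the elasticity values and prices are hypothetical.

```python
def tokens_demanded(price, elasticity, scale=1.0):
    """Constant-elasticity demand: quantity = scale * price ** -elasticity."""
    return scale * price ** (-elasticity)


def total_spend(price, elasticity, scale=1.0):
    """Total market spend on tokens at a given price."""
    return price * tokens_demanded(price, elasticity, scale)


# Halve the price under two assumed elasticities.
elastic_before = total_spend(1.0, elasticity=2.0)
elastic_after = total_spend(0.5, elasticity=2.0)      # demand quadruples

inelastic_before = total_spend(1.0, elasticity=0.5)
inelastic_after = total_spend(0.5, elasticity=0.5)    # demand grows by about 41%
```

With elasticity above 1, halving the price raises total spend, which is the Jevons case the argument relies on. With elasticity below 1, total spend falls. The claim that demand "more than absorbs" the cost reduction is therefore a claim that demand for "good enough" tokens is elastic.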

However, competitive pressures in the "good enough" mint are fierce precisely because the barriers to entry are low. If you can serve open-weight models at commodity prices, so can everyone else. Margins will compress toward the cost of the scarce inputs - compute, energy, memory, bandwidth. The winners will not be determined by the quality of their tokens (which, by definition, are fungible) but by advantages in input infrastructure: cheaper energy, better hardware utilisation, more efficient serving stacks, favourable geography.
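To see how those input advantages show up in the price floor, consider a rough cost model. It is a sketch under stated assumptions: it counts only amortised hardware and energy, ignores memory, bandwidth, staffing and cooling, and every figure for the two hypothetical mints is invented for illustration.

```python
def cost_per_million_tokens(hardware_cost_per_hour, power_kw, energy_price_per_kwh,
                            peak_tokens_per_second, utilisation):
    """Serving cost per million tokens from hardware amortisation and energy alone."""
    hourly_cost = hardware_cost_per_hour + power_kw * energy_price_per_kwh
    tokens_per_hour = peak_tokens_per_second * utilisation * 3600
    return hourly_cost / tokens_per_hour * 1_000_000


# Two hypothetical mints serving the same open-weight model on the same hardware.
mint_a = cost_per_million_tokens(
    hardware_cost_per_hour=2.00, power_kw=1.0, energy_price_per_kwh=0.10,
    peak_tokens_per_second=1000, utilisation=0.5,
)
mint_b = cost_per_million_tokens(
    hardware_cost_per_hour=2.00, power_kw=1.0, energy_price_per_kwh=0.05,
    peak_tokens_per_second=1000, utilisation=0.8,  # cheaper energy, busier hardware
)
```

The tokens are identical, so the model contributes nothing to the difference. In this toy setup the second mint's lower floor comes mostly from keeping its hardware busier, with cheaper energy adding a smaller gain, and in a market that compresses margins toward input cost that floor is the whole competitive position.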

The "good enough" mint is an infrastructure business, not a model business. Its moat is physical, not cognitive. Which means it follows the same logic as the rest of the post-Watershed economy: value accrues to whoever controls the scarce physical input.

For the frontier labs, this creates an uncomfortable strategic position. Their tokens are better - genuinely, measurably better on the tasks where fungibility breaks down. But the set of tasks where fungibility breaks down may be smaller than they need it to be. If 80% of token demand is for tasks where "good enough" is good enough, the frontier labs are competing for 20% of the market with a cost structure ten times higher. That is a luxury goods business. It can be profitable. It cannot be dominant.

The frontier labs know this. It is why they are racing to reduce their own costs, why they pursue efficiency research alongside capability research, why they offer tiered pricing with smaller, cheaper models alongside their flagships. They are trying to be both the luxury brand and the commodity provider. History suggests this is very difficult to sustain. Louis Vuitton does not also make backpacks for Kmart.

There is a further implication for harness engineering. If tokens are fungible within a domain, the harness becomes the differentiator - the thing that extracts more alpha from the same commodity token. A well-engineered harness that wrings 20% more alpha from a cheap token is more valuable than a poorly engineered harness running on an expensive one. The harness layer does not just orchestrate token expenditure; in a world of fungible tokens, it is the alpha.
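The comparison can be worked through with hypothetical figures. The sketch assumes the harness acts as a simple multiplier on gross alpha and that token spend is subtracted afterwards; both the model and the numbers are illustrative, and only the 20% uplift comes from the text.

```python
def harnessed_alpha(gross_alpha, harness_multiplier, token_cost):
    """Alpha kept after token spend, with the harness scaling gross alpha."""
    return gross_alpha * harness_multiplier - token_cost


# The tokens are fungible, so both cases start from the same gross alpha.
GROSS_ALPHA = 100.0

good_harness_cheap_token = harnessed_alpha(
    GROSS_ALPHA, harness_multiplier=1.2, token_cost=5.0
)
poor_harness_dear_token = harnessed_alpha(
    GROSS_ALPHA, harness_multiplier=1.0, token_cost=10.0
)
harness_advantage = good_harness_cheap_token - poor_harness_dear_token
```

On these assumptions the better harness on the cheap token retains 115 units of alpha against 90 for the weaker harness on the expensive one. Most of the gap comes from the harness multiplier rather than the token price, which is the sense in which the harness, not the token, carries the alpha.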

But "The Bitter Lesson" applies here too. As models improve, the harness advantage erodes. The fungibility frontier expands. Tasks that required frontier tokens last year require only "good enough" tokens this year. The luxury market shrinks. The commodity market grows. The harness that extracted alpha from quality differentials finds that the differentials have narrowed beneath it.

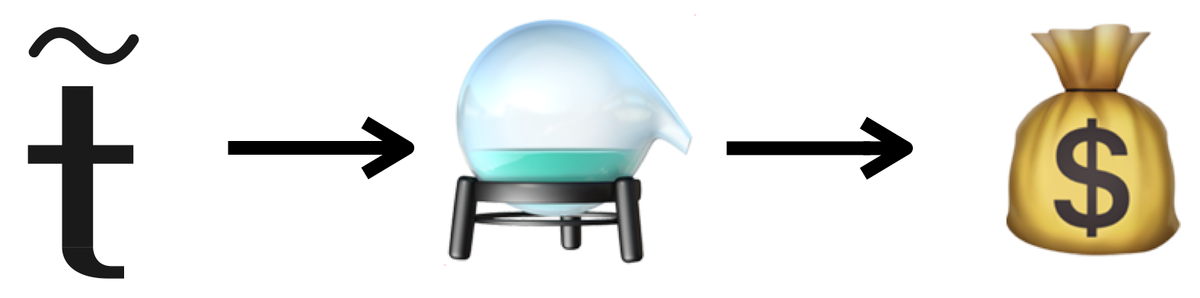

The adaptation of Gresham's Law to post-Watershed economics, then, is this:

"Good enough" tokens drive out great tokens.

Not because the market cannot tell the difference, but because in every domain where the difference does not make a difference - and that set of domains is growing, relentlessly, by the week - rational actors will choose the cheaper token every time. The expensive token is not debased. It is simply unnecessary. Wasted alpha.

The mint that prints "good enough" tokens at scale, on cheap infrastructure, with efficient serving - that is the business that captures the volume. The frontier lab that prints exquisite tokens for the shrinking set of tasks that demand them - that is the business that captures the margin. Both can exist. But the volume business is the one that shapes the economy.

Gresham understood this in 1558. The principle has not changed, but the currency has.