Foundations of Post-Watershed Economics

Dropping a new paper this morning - if your eyes start glazing over, here's the tl;dr: The cost of cognition has collapsed - economics and business urgently need to reorganise activities around that reality.

Have a read - very interested in your feedback!

Mark

Crossing the Watershed

Foundations of Post-Watershed Economics

Mark Pesce · University of Sydney · April 2026

Abstract

From mid-November through early December 2025, back-to-back releases of frontier AI models - Gemini 3 Pro, Opus 4.5 and GPT-5.2 - crossed a threshold: coding and reasoning agents became good enough for sustained, autonomous, long-task-horizon work. This is ‘The Watershed’. Post-Watershed, the marginal cost of cognitive work approaches zero - not as a gradual efficiency gain but as a phase change in the unit economics of thought. This paper argues that post-Watershed economics are best understood as a near analogue of monetary economics: infrastructure mints units of cognition (tokens); harnesses spend those tokens seeking alpha; and alpha can only come from sources the currency itself cannot buy - physical assets, relationships, regulatory position, taste, trust. The framework predicts super-exponential growth in token demand, rapid depreciation of businesses priced on old cognitive-cost assumptions, durable value in physical infrastructure, and structural advantage for small, greenfield organisations built entirely within the new ground condition.

1. Introduction

From mid-November 2025, three frontier AI models shipped in rapid succession: Google Gemini 3 Pro, Anthropic Claude Opus 4.5 and OpenAI GPT-5.2. Coding and reasoning agents crossed the threshold from minutes of autonomous work to hours. This is ‘The Watershed’: the discrete moment when the cost of cognitive work collapsed.

When cognitive work - analysis, synthesis, coding, planning, drafting, reasoning - approaches zero marginal cost, the economics of every business change. Not incrementally. Completely. The historical analogy is the introduction of the mechanical loom: a technology that obliterated an entire class of economic actor. The cottage weavers of the eighteenth century were not reorganised around the new tool; they were replaced by it. Their skill - the labour they sold - became an activity that machines performed more cheaply, faster, and at scale. Knowledge workers are the cottage weavers of the twenty-first century.

This paper proposes a new framework for the economics that follow from The Watershed. It takes near-zero-cost cognition as a ground condition - a state that has already come to pass - and works out what follows.

First, a quasi-monetary model: Post-Watershed, units of cognition function as currency. Infrastructure mints that currency - tokens. Harnesses spend it, seeking returns. This structural reframing shows that the dynamics of token supply, token inflation, and the search for alpha follow monetary logic, not production logic.

Second, a thesis: "long infrastructure, short spoon." Infrastructure compounds in value because it controls the token supply and cannot be replicated quickly. Applications, products, and services priced on the old cost basis of expensive human cognition - ‘spoons’ - are structurally overvalued, denominated in a currency being hyperinflated to near zero value. This is a direct economic consequence of the monetary model.

Third, a framework for identifying where value accumulates and where it is competed away. The post-Watershed business question is not "how do I use AI well?" It is: "What do I have that tokens can't buy, and how do I spend tokens to amplify it?" Businesses that can answer this question clearly will flourish. Businesses whose only asset is human thinking - whose entire value proposition is cognitive - have nothing that tokens can't buy. They are spoons.

Section 2 identifies the Watershed as a discrete event and distinguishes it from adjacent concepts. Section 3 develops the infrastructure-as-mint model and the economics of token generation. Section 4 introduces harnesses, alpha, and the taxonomy of non-mintable value. Section 5 examines the feedback dynamics - Jevons Paradox applied to cognition, the Bitter Lesson's implications for harness decay, and the time-preference created by token inflation. Section 6 draws out the implications for pre- and post-Watershed businesses. Section 7 concludes with what the framework predicts and where it is honest about its limits.

This paper is not about AI adoption, digital transformation, or how to use AI tools well. It does not concern itself with pre-Watershed businesses beyond asking how they might best adapt to new economic realities in a post-Watershed world.

2. Identifying the Watershed

From mid-November to early December 2025, three frontier AI models shipped in rapid succession: Google's Gemini 3 Pro, Anthropic's Opus 4.5, and OpenAI's GPT-5.2. Each, independently, crossed the same threshold. Coding and reasoning agents went from being useful for tasks measured in minutes to being ‘good enough’ for tasks measured in hours - sometimes days. Autonomous, sustained, long-task-horizon work became routine.

This is ‘The Watershed’. It is a discrete event, not a gradient. Functionally defined: The Watershed is the moment when deploying tokens against a cognitive task reliably generates alpha - returns exceeding the total cost of deployment, including the tokens, the harness, and the human attention required to verify the output. Before the Watershed, this was true for narrow cases. After it, this becomes the default condition across the broad class of knowledge work. The comparison set shifted: you no longer ask whether AI can do the job well enough. You ask whether a human can justify the premium.
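The functional definition above can be sketched as a simple accounting check. This is a minimal illustration, not a measurement method: the class name, field names, and all figures are hypothetical, and it assumes every cost and return can be expressed in one currency unit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deployment:
    """One attempt to point tokens at a cognitive task (all figures in one currency unit)."""

    value_returned: float     # value of the finished work to the business
    token_cost: float         # spend on the tokens themselves
    harness_cost: float       # tooling and orchestration attributable to this task
    verification_cost: float  # human attention needed to check the output

    def total_cost(self) -> float:
        """Tokens plus harness plus human verification."""
        return self.token_cost + self.harness_cost + self.verification_cost

    def alpha(self) -> float:
        """Return in excess of the total cost of deployment."""
        return self.value_returned - self.total_cost()

    def generates_alpha(self) -> bool:
        return self.alpha() > 0


# Hypothetical figures: identical tasks that differ only in how costly the output is to verify.
cheap_to_check = Deployment(value_returned=100.0, token_cost=5.0,
                            harness_cost=10.0, verification_cost=40.0)
costly_to_check = Deployment(value_returned=100.0, token_cost=5.0,
                             harness_cost=10.0, verification_cost=90.0)

print(cheap_to_check.alpha(), cheap_to_check.generates_alpha())
print(costly_to_check.alpha(), costly_to_check.generates_alpha())
```

With these invented numbers the first deployment clears the bar by 45 units and the second falls short by 5, even though token spend is the same. The point of the sketch is that the human attention required to verify the output, not the token bill, can decide whether a deployment generates alpha.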

Before The Watershed, an AI agent could write a function, summarise a document, or answer a question. After The Watershed, an agent could take a specification and build a working application, migrate a production website overnight, or conduct a multi-day research programme with minimal human intervention. The difference is not one of degree. An agent that works for five minutes and an agent that works for five hours are separated by the same gap that separates a calculator from an accountant.

Why "Good Enough" Matters More Than "Best"

The threshold that matters is not "best available" but "good enough for real work." Rich Sutton's "The Bitter Lesson"[1] - that general capability plus compute ultimately beats specialised engineering - predicts exactly this moment. The Watershed is not the moment AI became superhuman. It is the moment general-purpose AI became good enough to sustain autonomous work across the task horizons that constitute actual jobs.

"Good enough" is defined by task horizon, not benchmark scores. Pre-Watershed models topped leaderboards but could not hold context, maintain coherence, or recover from errors across a sustained piece of work. Post-Watershed models are not perfect - they hallucinate, they lose the thread, they sometimes need correction. But they can be given a brief and left to execute. That is the threshold that changes everything, because it is the threshold at which the cost of cognitive work collapses.

The Wile E. Coyote Condition

In the Warner Bros. cartoons, Wile E. Coyote regularly runs off the edge of a cliff and keeps running - legs pumping, perfectly fine - until he looks down. The moment he recognises there is no ground beneath him, gravity takes hold and he falls.

Every business formed before the Watershed is in exactly this position. They are operating on assumptions about the cost of cognitive work that are no longer true. They have not yet looked down. The repricing has not happened. But it will.

Imagine a consultancy that deploys AI agents to make its existing client engagements more efficient - faster reports, quicker turnarounds, same billing structure. This is the canonical error: using post-Watershed tools to preserve pre-Watershed structures. The agents do not make the consultancy more valuable. They make the consultancy unnecessary, because the client can now deploy those same agents directly. Every efficiency gain the consultancy captures is a capability the client will shortly internalise. The consultancy is running in mid-air.

The gap between pre-Watershed assumptions and post-Watershed reality widens geometrically, not linearly. Every week that passes, the cost of cognitive work falls further. Every week that passes, the businesses priced on the old cost basis become more overvalued. The repricing will not be gradual. It will be - as Hemingway had it - “Gradually and then suddenly.”[2]

What the Watershed Is Not

The Watershed is not AGI. It is not superintelligence. It is not the Singularity. It makes no philosophical claim about machine consciousness or the nature of intelligence. It is the mundane, practical, measurable moment when the cost of cognitive work collapsed - and the economics that follow from that are what matter, not the metaphysics.

3. Infrastructure and Token Generation

The Mint

Infrastructure produces tokens - units of cognition.

A caveat: the monetary metaphor illuminates the structure of post-Watershed economics - minting, spending, inflation, alpha - but like all metaphors it has limits. Tokens are not literally currency, and the analogy should be pressed for insight, not mistaken for identity.

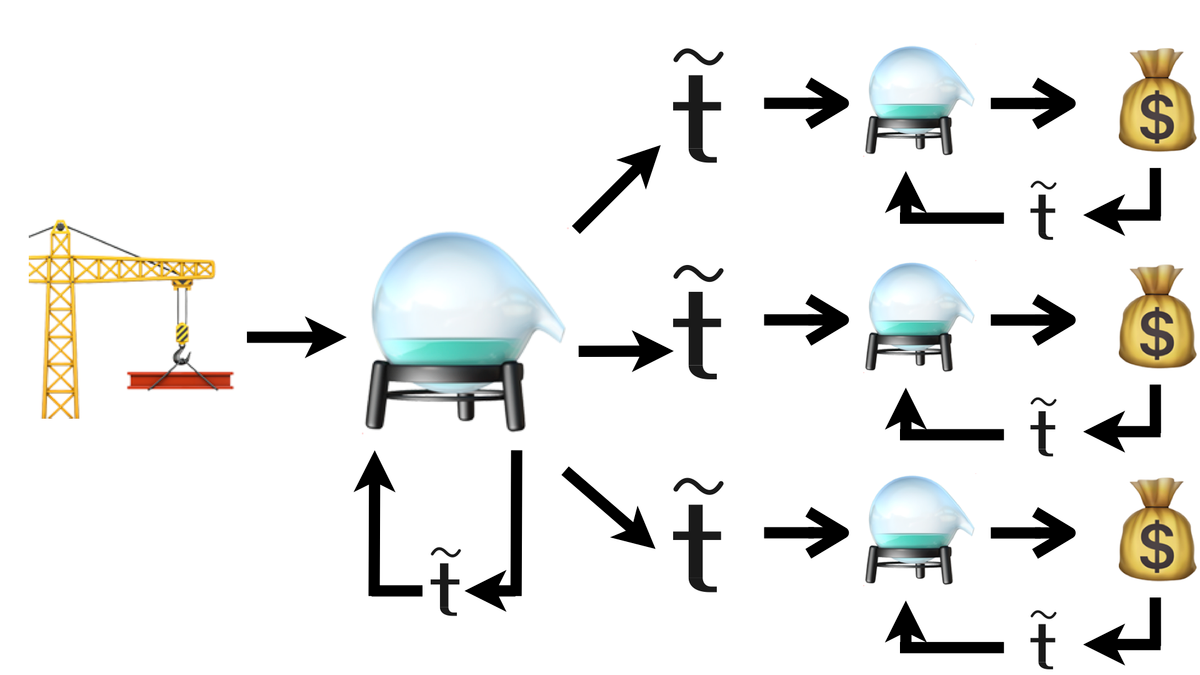

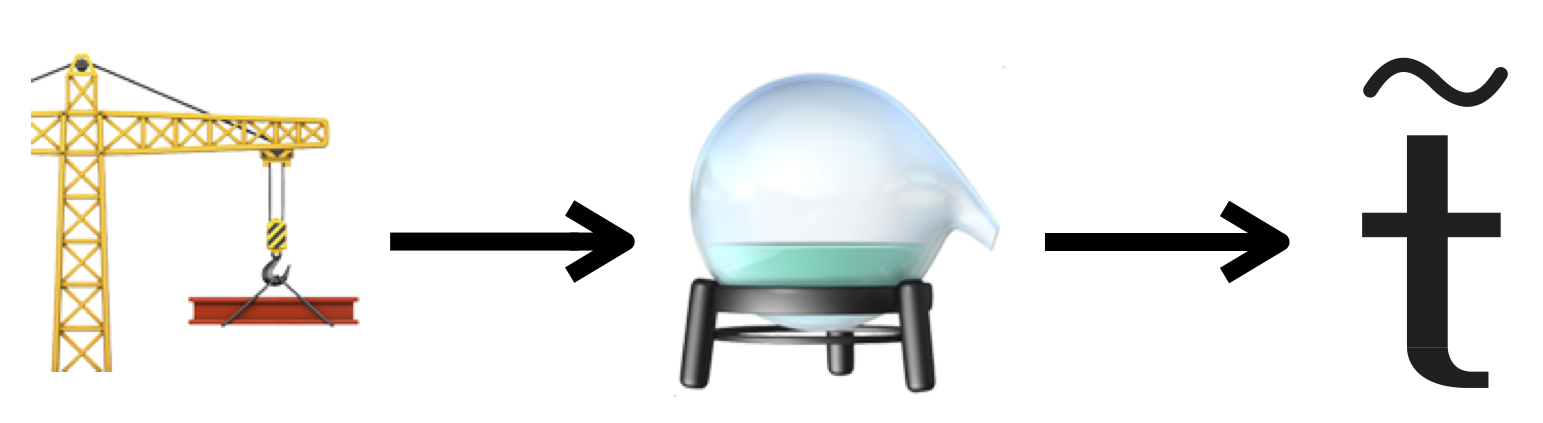

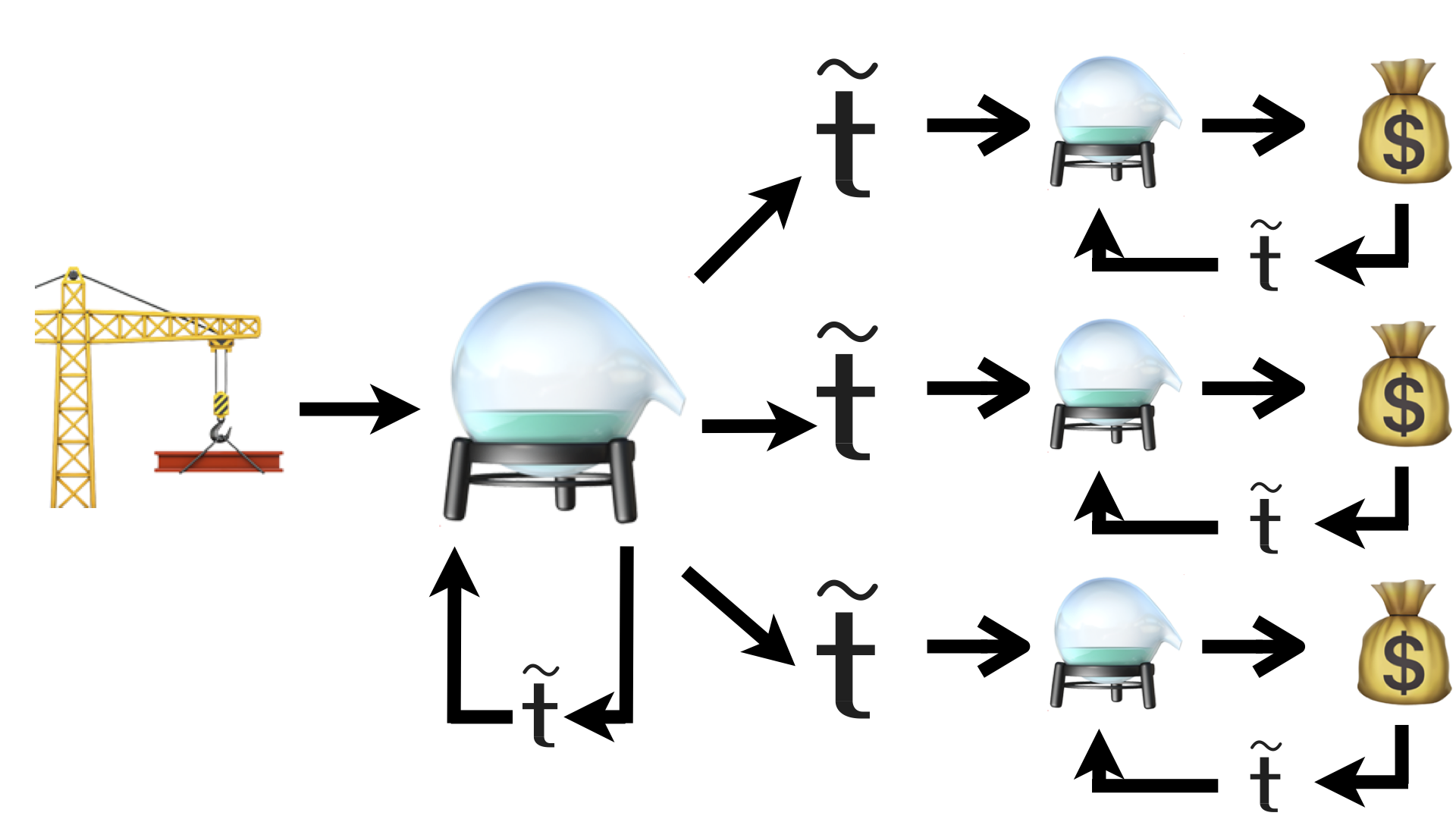

This is the minting function. The equation is simple:

Infrastructure + Process → Tokens

The infrastructure stack runs deep into physical reality: semiconductor fabrication (dominated by TSMC, dependent on helium supply chains), memory (Micron, SK Hynix - with Micron's quarterly revenue nearly tripling year-on-year[3]), GPU design (NVIDIA, projecting up to $1 trillion in revenue from Blackwell and Vera Rubin[4]), data centres, cooling systems, energy generation. Each layer has its own economics, its own scarcities, and its own lead times. A new fab takes three to four years to reach full capacity. A data centre takes eighteen months to two years. None of this can be conjured from a prompt.

The mint is physical.

Token Supply

Token generation is scaling super-exponentially. More capacity comes online every quarter. Models become more efficient - the DeepSeek thesis, demonstrated in early 2025,[5] proved that competitive capability could be achieved on far smaller compute clusters than anyone assumed. Open-weight models (Qwen 3.5, GLM-5) are approaching frontier performance at commodity prices. The cost per token is falling.

Total demand is rising far faster than costs are falling. Agents consume tokens to produce more agents consuming more tokens. At barely 1-2% adoption among the world's knowledge workers, infrastructure providers are already showing the strain - outages, rate limiting, time-of-day demand shaping. Anthropic doubled usage limits during off-peak hours to manage capacity. Codex went down under load. These are not anecdotes. They are demand signals at trivially low adoption rates, pointing toward a token supply crisis that will define the next decade of infrastructure investment.

The Binding Constraint Shifts

Pre-Watershed, the scarce input to economic activity was human cognition. Organisations acted as ‘harnesses’, coordinating expensive human thinking. Post-Watershed, cognition is abundant. The binding constraint shifts to physical reality: compute, energy, memory, fabrication, raw materials.

This is a well-understood economic response to a factor-cost collapse. When one input becomes cheap, value migrates to whoever controls the input that is still scarce. The history of industrialisation is a history of binding constraints shifting: from labour to capital, from capital to energy, from energy to information. Now the shift is from cognition to the physical infrastructure that ‘mints’ cognition.

The infrastructure stack - energy and raw inputs at the base, then semiconductors, then memory, then GPUs, then data centres, then cloud and API providers at the top - is where value accumulates. Each layer has its own bottlenecks. Energy costs are volatile and geopolitically exposed. TSMC is effectively a single point of failure for leading-edge fabrication. RAM grows arithmetically, not exponentially, making memory the quiet binding constraint that limits how much context an agent can hold and how many agents can run simultaneously. These are real, physical scarcities. They cannot be tokenised away.

"Long Infra"

Infrastructure compounds in value as demand scales, and it compounds fastest during periods of super-exponential demand growth. Infrastructure cannot be replicated quickly. It has physical, regulatory, and capital barriers to entry. The mint is always valuable because it controls the money supply.

Data centres overtaking office construction as the dominant commercial building type is a civilisational signal. We are rebuilding physical infrastructure around computation, leaving desks behind as uneconomic. The businesses that own the mint - or any of the scarce inputs the mint depends on - are positioned on the right side of the most important economic shift of the century.

"Long infra" is not investment advice. It is an observation about where value must accumulate when cognition is no longer the scarce factor of production. The mint is durable. Everything downstream of the mint is subject to the inflationary dynamics the mint creates.

4. Harnesses and Value Generation

The Spending Side

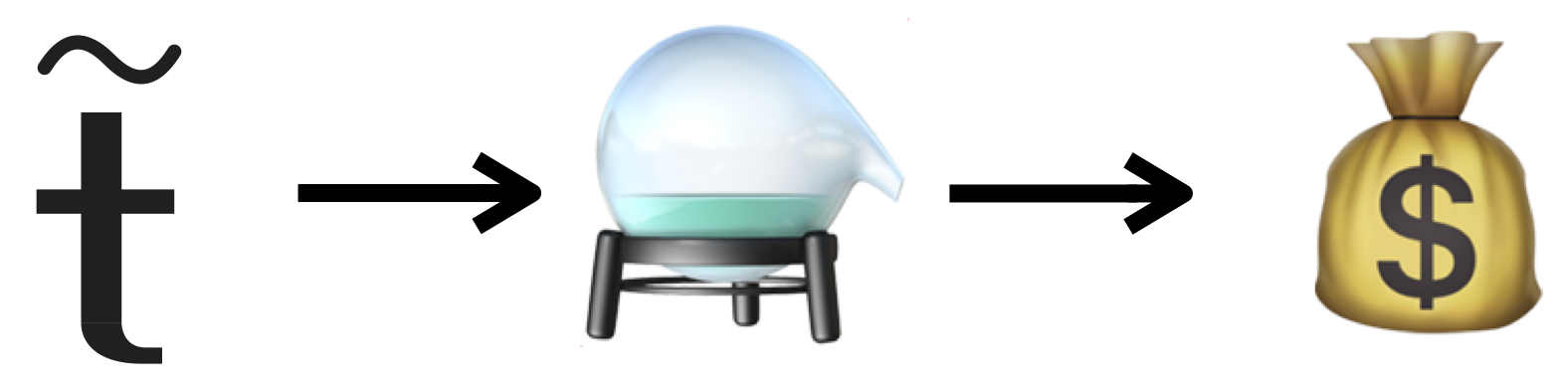

If infrastructure is the mint, harnesses are the spenders. A harness is anything that consumes tokens and directs them toward an outcome. The equation:

Tokens + Process → Value

The process is almost always a harness. Cursor, Claude Code, Claude Cowork, Codex, Google Antigravity, OpenClaw - these are the obvious examples, tools purpose-built to channel token expenditure into working software, analysis, or content. But a harness is not necessarily a product. Any workflow, process, or organisational structure that directs token expenditure toward a result is a harness. A well-written prompt is a harness. A startup's operating model is a harness. A human being deciding what to point the tokens at is a harness.

Alpha

Alpha is excess return - value generated above what raw, undirected token expenditure would produce. A harness that merely passes tokens through without amplification generates zero alpha. The harness earns its existence by making each token worth more than it would be on its own, using this surplus to fund further token purchases.

Here is the critical insight: alpha cannot be cognitive. Cognition is what the currency is. You cannot earn excess returns denominated in the thing you are already spending. If your competitive advantage is "we think better" - better analysis, better code, better strategy - that advantage is being minted by the trillion, cheaper every day, by every infrastructure provider on the planet. Cognitive alpha is a depreciating asset approaching zero.

Alpha must come from something the currency cannot buy. It must be non-mintable.

Sources of Non-Mintable Alpha

Incumbency. Installed base, existing contracts, switching costs, the brute fact of already being there. Incumbency is real alpha - but it depreciates. Tokens erode switching costs over time. When a startup can rebuild your product from a prompt, the lock-in that protected you becomes the lock-in that traps you.

Physical assets. Land, ports, mines, spectrum, data centre locations, warehouse networks. These cannot be tokenised. A token can optimise your logistics; it cannot manufacture a port.

Relationships. Trust between specific humans, reputation, networks built over years. Tokens can facilitate relationships - draft the email, schedule the meeting, analyse the stakeholder map - but they cannot fabricate the trust that makes a handshake worth something.

Regulation. Licences, permits, compliance requirements. Tokens can navigate regulation - they can draft the filing, model the compliance requirements, automate the reporting. But they cannot create regulatory position from nothing. The licence to operate a bank, the spectrum allocation, the pharmaceutical approval - these are granted by states, not minted by machines.

Taste. Curatorial judgment, aesthetic sense, cultural positioning. The human capacity to know what is good, not merely what is correct. Tokens can generate a thousand options; taste is knowing which one matters. This is not optimisation - optimisation is mintable. Taste is the thing that decides what is worth optimising in the first place. However, taste comes with a caveat: "The Bitter Lesson" predicts that general capability plus compute erodes the unique value of taste over time. Taste-as-selection - picking the best from a menu - is likely mintable. Taste-as-direction - deciding what menu to create - may prove more durable, but it would be unwise to bet the framework on it.

Trust. Trust is infrastructure. Verification, safety, accountability in agentic systems form a foundational layer that agents require to operate but cannot self-generate. When an agent acts on your behalf - moves money, signs a contract, makes a commitment - someone must be accountable. That accountability is human and institutional, not computational.

The Post-Watershed Business Question

The question is not "how do I use AI well?" Every business will use AI. That’s table stakes, not strategy.

The question is: "What do I have that tokens can't buy, and how do I spend tokens to amplify it?"

A mining company has physical assets. It spends tokens to optimise extraction, logistics, and exploration. The tokens amplify non-mintable alpha. A law firm with deep relationships in a specific jurisdiction has relational alpha. It spends tokens to automate the commodity legal work, freeing partners to do the thing tokens cannot: maintain trust with clients who are making consequential decisions.

Businesses that can answer this question clearly will flourish. Businesses that cannot - whose entire value proposition is cognitive work, whose only asset is expensive human thinking - have nothing that tokens cannot replicate, cheaper and faster, every passing week.

"Short Spoon"

Applications, products, and services priced on the old cost basis of expensive human cognition are structurally overvalued, denominated in the old currency - human cognitive labour - and that currency is being hyperinflated away. This is what "spoon" means in this framework:[6] any artefact of the pre-Watershed economy whose existence depends on cognition being expensive.

An accounting SaaS at $100 per month is a spoon - when the same functionality can be built from a prompt. A document-signing platform at $450 per team is a spoon - when a competitor offers the same service at $19 per organisation using post-Watershed economics.[7] An enterprise software consultancy billing by the hour for work that agents now complete in minutes is a spoon.

The intermediation layers that connect producers to consumers - the platforms, the exchanges, the marketplaces, the middlemen - have always been institutional responses to the high cost of coordination. That cost has collapsed. These spoons assumed a permanence they never possessed. Because they had always been around, we assumed they were infrastructure. They’ll disappear - gradually and then suddenly.

5. Token Feedbacks

Jevons Paradox Applied to Cognition

In 1865, William Stanley Jevons observed that as the efficiency of coal use improved, total coal consumption did not fall - it rose, dramatically.[8] Making a resource cheaper to use does not reduce demand. It unleashes it.

The same dynamic applies to cognition, but faster. When the cost of cognitive work collapses, demand does not merely increase - it explodes. Agents consume tokens to produce more agents consuming more tokens. The first-order consequence of good-enough agents is an exponential proliferation of agents. The consequence of that proliferation, in turn, is a super-exponential increase in token consumption.

This is not speculative. It is already visible. At barely 1-2% adoption among knowledge workers, infrastructure providers are straining under load. As adoption climbs - and as agents begin spawning agents autonomously - the demand curve steepens into something the infrastructure stack has never had to accommodate. Every improvement in model efficiency that reduces the cost per token makes it economic to attempt tasks that were previously too expensive, which generates new demand that more than absorbs the efficiency gain. Jevons, exactly as predicted.
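The claim that new demand "more than absorbs the efficiency gain" has a standard textbook form: it holds when demand is price-elastic, with elasticity greater than one. The sketch below assumes a constant-elasticity demand curve, which is an illustrative simplification and not something the framework specifies. The function names and elasticity values are hypothetical.

```python
def token_demand(price: float, elasticity: float, scale: float = 1.0) -> float:
    """Constant-elasticity demand: quantity = scale * price ** -elasticity (assumed form)."""
    if price <= 0:
        raise ValueError("price must be positive")
    return scale * price ** -elasticity


def total_spend(price: float, elasticity: float, scale: float = 1.0) -> float:
    """Total expenditure on tokens at a given price per token."""
    return price * token_demand(price, elasticity, scale)


# Halve the price per token under two hypothetical elasticities.
for elasticity in (0.5, 1.5):
    before = total_spend(1.0, elasticity)
    after = total_spend(0.5, elasticity)
    print(f"elasticity={elasticity}: spend changes by a factor of {after / before:.2f}")
```

Under this assumed curve, halving the price shrinks total spend when elasticity is below one and grows it when elasticity is above one. The Jevons argument for cognition amounts to a claim that token demand sits in the second regime.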

AutoResearch: Tokens Generating Knowledge

A specific and consequential feedback loop: tokens spent on research generate knowledge that makes future token expenditure more productive. An agent that surveys a field, synthesises findings, and identifies gaps produces an input that sharpens the next round of agent work. This is "The Bitter Lesson" operating in real time - general models plus massive token expenditure beat specialised domain expertise.

The implication for proprietary knowledge as a competitive moat is uncomfortable. Organisations that believe their accumulated expertise is irreplaceable are making a bet against "The Bitter Lesson." Enterprise fine-tuning - the practice of training models on proprietary data to capture institutional knowledge - may itself be a spoon. If a general model plus sufficient token expenditure can reconstruct the useful parts of that knowledge from first principles and public data, the fine-tuned model is not a moat. It is a shortcut with a shelf life.

The Bitter Lesson and Harness Decay

"The Bitter Lesson" predicts that harness alpha decays over time. As models become more capable - better at tool-calling, at self-orchestration, at reasoning about what context they need - the harness layer gets absorbed into the model itself.

This is already observable in small ways. An agent that independently installs a tool it needs to complete a task is performing work that a harness would previously have orchestrated. An agent that reasons about which APIs to call, in what order, with what parameters, is absorbing harness logic into its own cognition. Each capability improvement in the model is a capability subtracted from the harness.

This does not invalidate harness engineering as a near-term source of alpha. The current generation of harnesses - Cursor, Claude Code, Codex, OpenClaw - generate real value by bridging the gap between what models can do in principle and what they can do in practice. But "The Bitter Lesson" puts a time horizon on that value.

The directionality is clear: harnesses are spoons. Models are infrastructure.

The Flywheel

The system is not two equations running in parallel. It is a flywheel.

Tokens improve the process that produces tokens. The mint spends its own currency to improve the mint. Metacognitive self-modification - models contributing to the research that produces better models - is the token economics loop expressed as an engineering practice. The process that produces tokens is itself consuming tokens to improve, which produces better tokens, which improve the process further.

Tokens + Process → More/Better Tokens → More/Better Process → More/Better Value → ...

This is why the economics feel monetary rather than productive. In production economics, inputs are consumed. In this system, the currency amplifies itself through use. That is not a factory. That is compound interest - left to run, the most powerful force in economics.
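The compounding described in this section can be sketched as a toy simulation. It assumes, purely for illustration, that a fixed share of each cycle's token output is reinvested in the process and that each reinvested token adds a fixed amount of future output. The function name, parameters, and numbers are all hypothetical.

```python
def flywheel(initial_output: float, reinvest_share: float,
             improvement_per_token: float, cycles: int) -> list[float]:
    """Token output per cycle when part of each cycle's output improves the mint."""
    if not 0.0 <= reinvest_share <= 1.0:
        raise ValueError("reinvest_share must be between 0 and 1")
    outputs = [initial_output]
    for _ in range(cycles):
        current = outputs[-1]
        reinvested = current * reinvest_share
        # Reinvested tokens raise the next cycle's output; nothing else changes.
        outputs.append(current + reinvested * improvement_per_token)
    return outputs


# Hypothetical parameters: half of output reinvested, each reinvested token adds 0.2 tokens.
with_flywheel = flywheel(100.0, reinvest_share=0.5, improvement_per_token=0.2, cycles=10)
without_flywheel = flywheel(100.0, reinvest_share=0.0, improvement_per_token=0.2, cycles=10)

print([round(x, 1) for x in with_flywheel])
print([round(x, 1) for x in without_flywheel])
```

With reinvestment, output grows by the same multiple every cycle, which is the compound-interest shape. Without it, output stays flat. The sketch deliberately holds the improvement rate constant; the super-exponential case would need that rate to rise as well.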

6. Implications for Pre- and Post-Watershed Business

Pre-Watershed Businesses

Every business formed before the Watershed is priced on the assumption that cognitive work is expensive. That assumption is now false.

The NBER Working Paper 34836 quantifies the denial: 69% of firms report using AI, yet over 80% report no impact on productivity.[9] Average executive AI use is 1.5 hours per week, with a quarter reporting none at all. The Deloitte "State of AI in the Enterprise" report confirms the pattern from the other side: only 25% of companies have moved even 40% of their AI experiments into production, and only 34% are using AI for deep business transformation.[10] These firms are not failing to adopt the technology. They are failing to reorganise around what the technology makes possible.

This is the Wile E. Coyote condition at enterprise scale. The organisations that look strongest - record quarterly revenues, growing cloud commitments, expanding AI budgets - may be experiencing what Carlota Perez calls the installation phase: the period when transformative technology spending flows through existing channels precisely because those are the channels that exist.[11]

Brownfield systems - the accumulated sediment of pre-Watershed processes, software, and organisational structures - are structurally disadvantaged. They cannot be incrementally adapted. The coordination cost of an organisation is fixed, not marginal: you cannot add AI to a process designed for humans and expect transformation. You either redesign the entire workflow or you capture marginal value that does not justify the disruption. The gap between adapted and legacy widens geometrically, not linearly, because each improvement in model capability makes the legacy approach comparatively worse while simultaneously making the redesigned approach comparatively better.

An infrastructure company that targets a 10% productivity improvement from AI tools is issuing itself a death sentence: at ten per cent, the floor plan stays the same. A target of seventy per cent means tearing up the floor plan - and that is where the value is.

Post-Watershed Businesses

Post-Watershed businesses take near-zero cognitive cost as a ground condition, not an aspiration. They are designed from scratch for a world in which the expensive input is not thinking but everything that is not thinking.

They are small. AI enables individuals and very small teams to do what previously required fifty-person companies. The reason is not simply that AI makes each person more productive, but that tiny teams also lack the organisational overhead that turns a tenfold capability gain into a ten per cent improvement. A solo practitioner or a three-person team has no coordination cost to absorb the gain, and so gets the full multiplier.
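One way to see how a tenfold capability gain can shrink to a ten per cent improvement is to borrow the arithmetic of Amdahl's law from computing. This is an analogy brought in here for illustration, not part of the framework itself: it assumes only a share of an organisation's total effort is cognitive work that the tools accelerate, while the rest (coordination, hand-offs, approvals) runs at the old speed. The function name and the shares are hypothetical.

```python
def overall_gain(accelerated_share: float, speedup: float) -> float:
    """Amdahl-style overall speedup when only part of the work gets faster."""
    if not 0.0 <= accelerated_share <= 1.0:
        raise ValueError("accelerated_share must be between 0 and 1")
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    untouched = 1.0 - accelerated_share
    return 1.0 / (untouched + accelerated_share / speedup)


# Hypothetical shares of total effort that a tenfold gain actually reaches.
scenarios = [("heavy coordination overhead", 0.10), ("no coordination overhead", 1.00)]
for label, share in scenarios:
    print(f"{label}: {overall_gain(share, 10.0):.2f}x overall")
```

Under these assumptions, an organisation in which the tenfold gain reaches only a tenth of total effort ends up roughly ten per cent faster overall, while one with nothing to dilute the gain gets the full tenfold multiplier.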

Tiny teams compete on non-mintable alpha. A post-Watershed business asks: what do we have that tokens cannot buy? It then spends tokens - aggressively, at scale - to amplify that thing. The mining company spends tokens on exploration analytics; the alpha is the mineral rights. The boutique law firm spends tokens on contract automation; the alpha is the client relationships. The design studio spends tokens on rapid prototyping; the alpha is taste - with the caveat that "The Bitter Lesson" may erode even that over time.

Software itself shifts from product to content - generated on demand, tailored to the moment, shared socially rather than sold commercially, ephemeral rather than permanent. When software can be manufactured from a prompt, it stops being an asset. The entire valuation model for software companies rests on software being expensive to produce. That premise is gone.

The New Balance Sheet

Every business has a balance sheet that can be read through the lens of this framework.

On one side: assets denominated in cognitive capacity. Team size, expertise depth, process intellectual property, proprietary algorithms, institutional knowledge. These are depreciating rapidly. They are denominated in the currency being inflated away.

On the other side: assets denominated in non-mintable alpha. Physical infrastructure, trust relationships, regulatory positions, cultural capital, installed base. These are appreciating - not because they have intrinsically become more valuable, but because everything denominated in cognition is becoming less valuable around them.

The strategic question for any business is simple: What fraction of your balance sheet is cognitive, and what fraction is non-mintable? The answer determines whether you are long infrastructure or short spoon - whether the Watershed is an opportunity or an extinction event.
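The strategic question can be made concrete with a rough calculation. The sketch below sorts asset categories into the two sides described in this section and reports the cognitive fraction. The category labels paraphrase the lists above, while the two example businesses and every valuation are invented for illustration.

```python
# Category labels follow the two sides of the balance sheet described above.
COGNITIVE = {"team_size", "expertise", "process_ip",
             "proprietary_algorithms", "institutional_knowledge"}
NON_MINTABLE = {"physical_infrastructure", "trust_relationships",
                "regulatory_positions", "cultural_capital", "installed_base"}


def cognitive_fraction(assets: dict[str, float]) -> float:
    """Share of total asset value that sits in the cognitive categories."""
    unknown = set(assets) - COGNITIVE - NON_MINTABLE
    if unknown:
        raise ValueError(f"uncategorised assets: {sorted(unknown)}")
    total = sum(assets.values())
    if total <= 0:
        raise ValueError("total asset value must be positive")
    cognitive = sum(value for name, value in assets.items() if name in COGNITIVE)
    return cognitive / total


# Hypothetical valuations in arbitrary units.
consultancy = {"expertise": 70.0, "institutional_knowledge": 20.0, "trust_relationships": 10.0}
miner = {"physical_infrastructure": 80.0, "regulatory_positions": 15.0, "expertise": 5.0}

print(f"consultancy: {cognitive_fraction(consultancy):.0%} cognitive")
print(f"miner: {cognitive_fraction(miner):.0%} cognitive")
```

The hard part in practice is the valuation, not the division: deciding how much of an asset such as an installed base is truly non-mintable is a judgement the code cannot make.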

Infrastructure Companies and Their Spoons

Even the infrastructure companies - the ones positioned on the right side of the framework - carry spoon dependencies internally. SAP, Oracle, and Microsoft run their own operations on layers of pre-Watershed software and processes. The most valuable near-term opportunity may not be building post-Watershed businesses from scratch but helping infrastructure companies eliminate their internal spoon dependencies. The consultancy that helps a data-centre operator replace its legacy procurement, HR, and compliance systems with post-Watershed equivalents is positioned at the intersection of durable demand and structural necessity.

7. Conclusions

The Framework

The Watershed is the discrete moment when cognitive work approached zero marginal cost. It happened in late 2025. It is not a metaphor, a forecast, or a thought experiment. It is a condition.

Post-Watershed economics resemble monetary economics. Infrastructure mints tokens - units of cognition. Harnesses spend those tokens seeking alpha. Alpha can only come from sources the currency itself cannot buy: physical assets, relationships, regulatory position, taste (with caveats), and trust. "Long infrastructure, short spoon" is the direct economic expression of this model.

The post-Watershed business question is not "how do I use AI well?" It is: "What do I have that tokens can't buy, and how do I spend tokens to amplify it?"

What This Framework Predicts

Super-exponential growth in token demand. Jevons paradox applied to cognition means that every reduction in the cost of cognitive work generates more than proportional new demand. The infrastructure required to meet that demand will be the defining capital expenditure of the coming decade.

Value migration from cognitive assets to physical and relational assets. Anything denominated in human cognitive labour is depreciating. Anything non-mintable is appreciating, relatively if not absolutely.

Rapid depreciation of businesses priced on pre-Watershed assumptions. The Wile E. Coyote condition is general. The repricing will happen gradually, then suddenly.

Harness alpha decay. "The Bitter Lesson" predicts that the harness layer - the current generation of tools that orchestrate and direct token expenditure - will be progressively absorbed into the models themselves. Harness engineering is a real and valuable near-term practice. It is not a durable moat.

Structural advantage for small, greenfield organisations. Tiny teams get the full multiplier because they lack the coordination cost that absorbs capability gains in larger organisations. Post-Watershed businesses designed from scratch will outperform adapted pre-Watershed businesses by a margin that widens over time.

Infrastructure as the durable store of value. The mint endures. The things the mint produces are spent, inflated, competed away. The mint itself - and the physical, scarce inputs it depends on - compounds.

What This Framework Does Not Predict

Timing. "Gradually and then suddenly" is a shape, not a schedule. The framework identifies what must happen without claiming to know when. The repricing of pre-Watershed businesses could take two years or ten. The absorption of the harness layer could be rapid or slow. The framework describes direction, not velocity.

Specific winners. The framework identifies categories - infrastructure, non-mintable alpha, post-Watershed greenfield - but not which specific companies within those categories will flourish. Execution still matters. So does timing. So does luck.

Completeness. The demand-side dynamics need further development. The taxonomy of non-mintable alpha is provisional - there may be sources of alpha not yet identified, and some listed here may prove less durable than expected. The quasi-monetary model is a framework, not a finished theory.

Acknowledgements

Without the continuing input of my friend and noops collaborator John Allsopp, this paper would never have come together - or would have taken much longer to arrive. I am indebted to him for that support.

Viveka Wiley, Hugo O’Connor and Rob Manson all received early drafts of this paper; their wise comments helped me to refine my arguments in significant ways. My thanks to them.

This paper was composed using Claude Cowork. I remain responsible for any errors that may have crept into it.

Notes

1. Richard Sutton, "The Bitter Lesson," 13 March 2019. http://www.incompleteideas.net/IncIdeas/BitterLesson.html

2. Ernest Hemingway, The Sun Also Rises (New York: Scribner, 1926). "How did you go bankrupt?" "Two ways. Gradually and then suddenly."

3. Micron Technology, Inc., Q2 FY2026 Earnings Release, March 2026. Revenue of $23.86 billion, up from $8.05 billion in Q2 FY2025.

4. NVIDIA GTC 2026 Keynote, Jensen Huang, 16 March 2026. Combined Blackwell and Vera Rubin revenue projections reaching $1 trillion.

5. DeepSeek demonstrated in January 2025 that frontier-competitive models could be trained for approximately $6 million using 2,000 NVIDIA H800 GPUs - a fraction of the cost assumed necessary for comparable capability.

6. "There is no spoon." The Matrix, dir. Lilly Wachowski and Lana Wachowski (Warner Bros., 1999).

7. Holosign vs DocuSign pricing comparison: Holosign at $19/organisation vs DocuSign at $15-45/user/month.

8. W. Stanley Jevons, The Coal Question: An Inquiry Concerning the Progress of the Nation, and the Probable Exhaustion of Our Coal Mines (London: Macmillan, 1865).

9. Ivan Yotzov, Nicholas Bloom et al., "Firm Data on AI," NBER Working Paper 34836, February 2026. Survey of approximately 6,000 senior business executives across the US, UK, Germany, and Australia.

10. Deloitte, "The State of AI in the Enterprise: The Untapped Edge," January 2026. Survey of 3,235 respondents across 24 countries.

11. Carlota Perez, Technological Revolutions and Financial Capital: The Dynamics of Bubbles and Golden Ages (Cheltenham: Edward Elgar, 2002).